CEVA Acquires Spatial Audio Business from VisiSonics to Expand its Application Software Portfolio for Embedded Systems Targeting Hearables and Other Consumer IoT Markets

ROCKVILLE, Md., May 10, 2023 /PRNewswire/ -- CEVA, Inc. (NASDAQ: CEVA), the leading licensor of wireless connectivity and smart sensing technologies and custom SoC solutions, today announced the acquisition of the RealSpace® 3D Spatial Audio business, technology and patents from VisiSonics Corporation. Based in Maryland, close to CEVA’s sensor fusion R&D center, the VisiSonics spatial audio R&D team and software extend the Company’s application software portfolio for embedded systems and bolster CEVA’s strong market position in hearables, where spatial audio is fast becoming a must-have feature.

Spatial audio will also drive innovation in many other end markets, including gaming, AR/VR, audio conferencing, healthcare, automotive and media entertainment, all of which CEVA can further address following this acquisition. Future Market Insights estimates that the market for 3D audio will grow 4.1X from 2022 to 2032, reaching nearly $31.9 billion in 2032.

Amir Panush, CEO of CEVA, commented: “The acquisition of the VisiSonics’ spatial audio software and business builds on an already strong relationship and allows us to better serve OEMs who wish to enhance the audio experience of their products with a best-in-class immersive spatial audio solution with such world-class providers as THX Ltd. The software is market-proven with industry leaders in gaming and hearables and presents us with an opportunity to expand our customer base by delivering on the true potential of this technology, including entry into the burgeoning consumer IoT and automotive markets.”

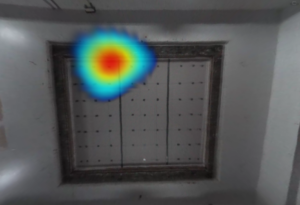

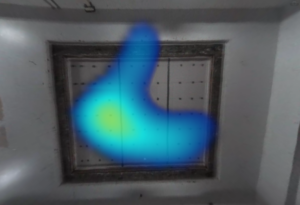

RealSpace spatial audio rendering software provides the most accurate digital simulation of real-life, immersive sound in the industry. Its proprietary algorithms create a realistic aural experience from as little as two-channel stereo audio, while also supporting full multi-channel and ambisonics formats. With dynamic head tracking enabled, the RealSpace experience is even more immersive: sound sources stay fixed in place while the user’s head moves, simulating real-world listening. This lets users experience theater-like sound through headphones or earbuds when watching movies, playing video games, or listening to music, podcasts or conference calls.
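Dynamic head tracking keeps a virtual source fixed in the room by compensating for head rotation. The minimal Python sketch below illustrates only that compensation step, for yaw alone. It is not the RealSpace or MotionEngine algorithm, and the function name and angle convention (degrees, 0 = straight ahead in the room, positive = counterclockwise when viewed from above) are hypothetical assumptions. A complete renderer would presumably also handle pitch and roll and apply binaural filtering to the resulting direction.

```python
def head_relative_azimuth(source_azimuth_deg: float, head_yaw_deg: float) -> float:
    """Return the direction of a world-fixed source relative to the listener's head.

    Hypothetical convention: angles in degrees, 0 = straight ahead in the room,
    positive = counterclockwise (to the left) when viewed from above.
    The result is wrapped into the range [-180, 180).
    """
    relative = source_azimuth_deg - head_yaw_deg
    # Wrap so that, for example, 190 degrees becomes -170 degrees.
    return (relative + 180.0) % 360.0 - 180.0


if __name__ == "__main__":
    # A virtual center speaker placed straight ahead (0 degrees) in the room.
    source = 0.0
    # Hypothetical yaw readings from a head tracker as the listener turns left.
    for yaw in (0.0, 30.0, 90.0):
        rel = head_relative_azimuth(source, yaw)
        print(f"head yaw {yaw:+.0f} deg -> render source at {rel:+.0f} deg")
```

As the listener turns left, the rendered source swings to the right by the same angle, so it appears to stay put in the room.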

By integrating VisiSonics’ spatial audio software with CEVA’s MotionEngine™ sensor fusion software, RealSpace provides a complete spatial audio solution with dynamic head tracking. The combination can run directly on headphones or earbuds: it is available as an embedded library for wireless audio SoCs and has already been ported to both DSPs and Arm Cortex-M class MCUs. This gives OEMs and semiconductor companies a simple way to incorporate high-performance spatial audio with precise head tracking into their products, with low power consumption and minimal system requirements.

Ramani Duraiswami, founder and Chief Executive Officer of VisiSonics, stated: “For more than a decade, we have pushed the boundaries of innovation in the areas of capture, rendering, and personalizing spatial audio to create our spatial audio software. Now as spatial audio is set to become mainstream, CEVA is the right company to take our technology forward and fully exploit its potential through their global presence and synergistic technologies, and with key partners like THX Ltd.”

VisiSonics’ partner THX®, the world-class audio and video certification and technology company, has used this technology in its tools for game developers and music producers, and in advanced THX audio personalization for headset manufacturers. In addition, CEVA and VisiSonics collaborated to bring the complete RealSpace spatial audio solution to boAt, India’s #1 hearables and wearables company, which announced its first spatial audio headphones with dynamic head tracking, the boAt Nirvana Eutopia, earlier this year.

Jason Fiber, Chief Executive Officer of THX Ltd., added: “We have enjoyed a successful relationship with the VisiSonics 3D spatial audio team, incorporating their leading-edge software into our spatial audio solutions for gaming, music, and hearables. CEVA is an ideal company to work with THX to take spatial audio solutions to the next level given its synergistic voice, audio and sensor fusion solutions and embedded systems expertise. I look forward to working with CEVA to create further enhanced entertainment experiences for our customers.”

For more information on CEVA’s RealSpace spatial audio solution, visit www.ceva-dsp.com/product/ceva-realspace. For further information about THX, please visit THX.com.

Forward Looking Statements

This press release contains forward-looking statements that involve risks and uncertainties, as well as assumptions that, if they never materialize or prove incorrect, could cause the results of CEVA to differ materially from those expressed or implied by such forward-looking statements and assumptions. Forward-looking statements include statements regarding the impact and potential benefits of the VisiSonics 3D Spatial Audio acquisition, market trends related to 3D audio, and potential opportunities for expanding CEVA’s relationship with VisiSonics. The risks, uncertainties and assumptions that could cause differing CEVA results include: any difficulty associated with integrating the 3D Spatial Audio team and technology into CEVA’s existing business; the scope and duration of the COVID-19 pandemic; the extent and length of the restrictions associated with the COVID-19 pandemic and the impact on customers, consumer demand and the global economy generally; the ability of CEVA DSP cores and other technologies to continue to be strong growth drivers for us; our success in penetrating new markets, including in the base station and IoT markets, and maintaining our market position in existing markets; our ability to diversify the company’s royalty streams; the ability of products incorporating our technologies to achieve market acceptance; the maturation of the connectivity, IoT and 5G markets; the effect of intense industry competition and consolidation; global chip market trends, including supply chain issues as a result of COVID-19 and other factors; the possibility that markets for CEVA’s technologies may not develop as expected or that products incorporating our technologies do not achieve market acceptance; our ability to timely and successfully develop and introduce new technologies; our ability to successfully integrate Intrinsix into our business; and general market conditions and other risks relating to our business, including, but not limited to, those that are described from time to time in our SEC filings. CEVA assumes no obligation to update any forward-looking statements or information, which speak as of their respective dates.

About CEVA, Inc.

CEVA is the leading licensor of wireless connectivity and smart sensing technologies and custom SoC solutions for a smarter, safer, connected world. We provide Digital Signal Processors, AI engines, wireless platforms, cryptography cores and complementary embedded software for sensor fusion, image enhancement, computer vision, spatial audio, voice input and artificial intelligence. These technologies are offered in combination with our Intrinsix IP integration services, helping our customers address their most complex and time-critical integrated circuit design projects. Leveraging our technologies and chip design skills, many of the world’s leading semiconductor companies, system companies and OEMs create power-efficient, intelligent, secure and connected devices for a range of end markets, including mobile, consumer, automotive, robotics, industrial, aerospace & defense and IoT.

Our DSP-based solutions include platforms for 5G baseband processing in mobile, IoT and infrastructure, advanced imaging and computer vision for any camera-enabled device, audio/voice/speech and ultra-low-power always-on/sensing applications for multiple IoT markets. For motion sensing solutions, our Hillcrest Labs sensor processing technologies provide a broad range of sensor fusion software and inertial measurement unit (“IMU”) solutions for markets including hearables, wearables, AR/VR, PC, robotics, remote controls and IoT. For wireless IoT, our platforms for Bluetooth connectivity (low energy and dual mode), Wi-Fi 4/5/6 (802.11n/ac/ax), Ultra-wideband (UWB), NB-IoT and GNSS are the most broadly licensed connectivity platforms in the industry.